Introduction to Deabstraction

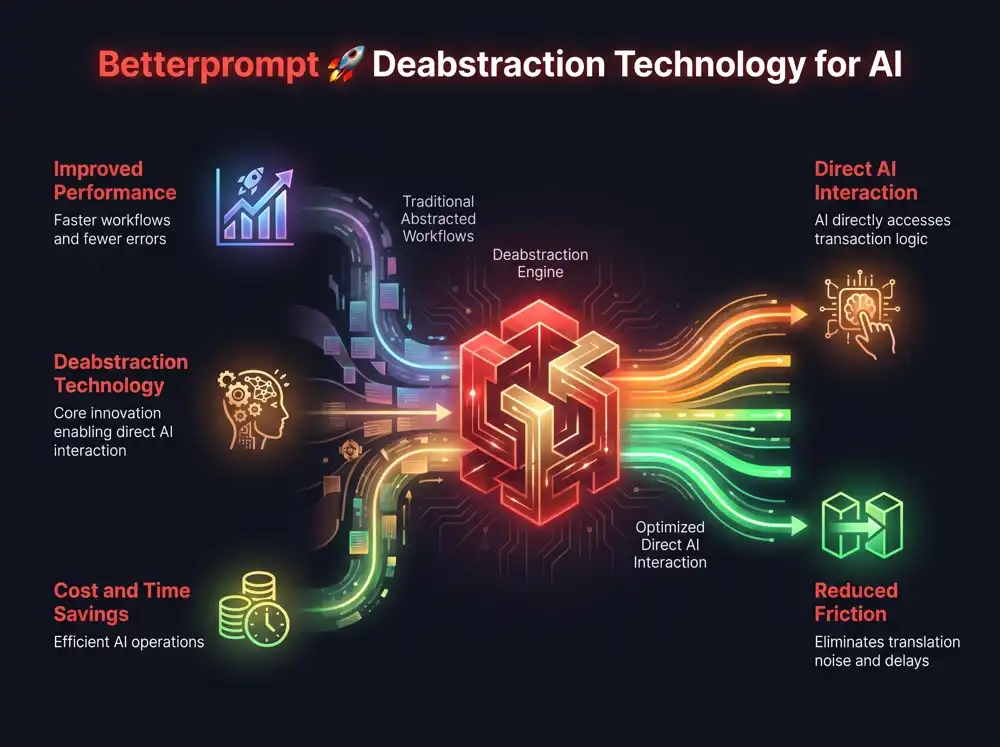

Explaining your intent to an AI can be challenging. We help you regain control and achieve superior performance by turning your ideas into real-world outcomes. Deabstraction removes the layers of translation noise that lead to hallucinations and wasted tokens. By letting AI interact directly with transaction logic, we eliminate the friction that slows progress.

Why Abstraction Is Holding AI Back

Better Prompt's core philosophy is that every layer of abstraction adds translation noise, and that this noise results in technical debt, wasted tokens, and errors. Deabstraction lets AI models bypass traditional graphical rendering and interact directly with the underlying logic. This removes the friction that slows artificial intelligence down and sidesteps the natural-language bottleneck.

Breaking the Chains of Human-Centric Design

In the race to build Agentic AI, developers have hit a wall of human-centric design. For decades, software has relied on abstraction layers such as Content Management Systems (CMSs), Graphical User Interfaces (GUIs), and Application Programming Interfaces (APIs) to shape the user experience.

For AI, these abstractions are a significant new hurdle. They obscure critical data, bloat context windows with unnecessary visual formatting, and block direct execution. This is one reason AI can sometimes feel like it is merely “stochastic parroting” rather than truly understanding.
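To make the context-window cost concrete, here is a minimal Python sketch that compares the same data rendered as an HTML fragment (as a web page might show it to a human) against a compact JSON payload. The order record and the `rough_token_count` helper are hypothetical and for illustration only; the helper is a crude proxy, not a real model tokenizer, so only the relative difference is meaningful. This is not Better Prompt's implementation.

```python
import json
import re

# Hypothetical order record, used only for illustration.
order = {"order_id": "A-1001", "item": "USB-C cable", "qty": 2, "unit_price": 9.99}

# The same data as a human-facing page fragment might render it:
# layout tags, CSS classes, labels and a button all ride along.
html_view = f"""
<div class="order-card">
  <h2 class="title">Order <span class="id">{order['order_id']}</span></h2>
  <table class="details">
    <tr><td class="label">Item</td><td class="value">{order['item']}</td></tr>
    <tr><td class="label">Quantity</td><td class="value">{order['qty']}</td></tr>
    <tr><td class="label">Unit price</td><td class="value">${order['unit_price']:.2f}</td></tr>
  </table>
  <button class="btn btn-primary">Checkout</button>
</div>
"""

# The same data with the presentation layer stripped away.
structured_view = json.dumps(order, separators=(",", ":"))


def rough_token_count(text: str) -> int:
    """Crude proxy for token count: runs of word characters plus single
    punctuation marks. Real LLM tokenizers give different absolute numbers."""
    return len(re.findall(r"\w+|[^\w\s]", text))


if __name__ == "__main__":
    for name, text in [("HTML", html_view), ("JSON", structured_view)]:
        print(f"{name}: {len(text)} chars, ~{rough_token_count(text)} tokens")
```

Both views carry the same four facts, but the HTML version spends most of its length on presentation that a model has to read past before it reaches them.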

To combat this, Better Prompt has set out to quietly dismantle the very architecture of the modern web and build a “bare metal” AI environment, using its pioneering Deabstraction Technology and a brand-new open-source project called BetterPrompt Rocket.

“We realised that treating an AI model like a human user is the fall from grace of the original design. This focus is forcing supercomputers to tap-dance through interfaces designed for eyes and thumbs. Deabstraction is about tearing down the layers and guard rails that exist solely for human cognitive limitations, giving AI models direct access to system logic.”

– Andy Futcher, Co-Founder of Better Prompt

How Does Deabstraction Work?

Models operating in a deabstracted environment can complete complex, multi-step workflows with fewer iterations and fewer errors, because they are not forced to navigate interfaces built for humans. Removing this human-interpretable overhead lets models operate at the speed of silicon and apply techniques such as Chain-of-Thought reasoning more effectively, which translates into direct savings in cost and time.

Why is Deabstraction critical for Agentic AI?

Agentic AI relies on autonomy to perform complex tasks. When AI agents must parse visual DOM elements or human-readable text meant for screens, they waste computational power and tokens. Deabstraction provides a direct line to the transaction logic, enabling faster, cheaper, and more accurate autonomous actions.
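The contrast between the two paths can be sketched in Python. Everything here is hypothetical and invented for illustration (the page snippet, `price_from_dom`, `Quote`, `get_quote`, and the catalog), not part of Better Prompt or any real API: the first function recovers a value by scraping markup meant for human eyes, while the second reads it straight from the underlying logic as typed data.

```python
import re
from dataclasses import dataclass

# Path 1 (human-centric): the agent scrapes a rendered page.
RENDERED_PAGE = '<div class="price">Total: <b>$19.98</b></div>'


def price_from_dom(html: str) -> float:
    """Extract a dollar amount from rendered markup.
    Breaks as soon as the layout or currency formatting changes."""
    match = re.search(r"\$([0-9]+\.[0-9]{2})", html)
    if match is None:
        raise ValueError("price not found; page layout may have changed")
    return float(match.group(1))


# Path 2 (deabstracted): the agent calls the transaction logic directly.
@dataclass(frozen=True)
class Quote:
    item: str
    qty: int
    unit_price_cents: int  # integer cents avoid float rounding surprises

    @property
    def total_cents(self) -> int:
        return self.qty * self.unit_price_cents


def get_quote(item: str, qty: int) -> Quote:
    """Hypothetical backend lookup; a real system would query its own store."""
    catalog = {"USB-C cable": 999}
    return Quote(item=item, qty=qty, unit_price_cents=catalog[item])


if __name__ == "__main__":
    print("scraped:", price_from_dom(RENDERED_PAGE))
    print("direct:", get_quote("USB-C cable", 2).total_cents, "cents")
```

The scraping path silently depends on page layout and number formatting, whereas the direct call returns a typed value with nothing to misread.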

Features and Benefits

| Reasons | Better Prompt Neutral Language | Natural Language Prompting |
|---|---|---|
| Aligns prompts to high-value scientific training data | Yes | No |
| Saves time, saves tokens and decreases context window usage | Yes | No |
| Works with your favorite chatbot and improves your AI experience | Yes | No |
| Helps protect your privacy by filtering sensitive information | Yes | No |
| Deabstraction technology enhances model reasoning capabilities | Yes | No |
| Reduces misinterpretation with Deambiguation language filters | Yes | No |
| Locally stored prompt history acts as your Incognito mode for AI | Yes | No |
| Reduces the risk of prompt injection for improved AI safety | Yes | No |
| Rapidly interprets your prompts to level up your prompting skills | Yes | No |
| Advocates for you by providing insight into, and choice of, models | Yes | No |
| Total reasons to use it | At least 10 | Not many |

Explore Further

Dive deeper into the concepts that power Better Prompt and the future of AI interaction.

- Prompting Techniques: Learn how to prompt better, understand chain-of-thought, and explore prompt structure and modular architecture.

- AI Concepts: Grasp key ideas like Large Language Models (LLMs), Natural Language Processing (NLP), Generative AI, and the challenge of human alignment.

- Safety & Security: Read about the risks of prompt injection, how to build layered security, and the role of an auditor AI.

- Image Generation: Apply these principles to text-to-image models, improve adherence to your prompt, and achieve greater realism.

Frequently Asked Questions

What exactly is Deabstraction Technology?

How does Deabstraction differ from standard prompt engineering?

What are AI abstraction layers?

Can Deabstraction really reduce AI hallucinations?

Is Deabstraction safe? What about prompt injection?

How does Better Prompt's Neutral Language Engine help?

Why is bypassing the GUI so important for AI?

What is 'Agentic AI' and why does it need Deabstraction?

How can I start using these principles to get better AI results?

Does Deabstraction apply to image generation models too?